By James L. Farrell

Concerns raised about cascaded Kalman filters for loose coupling and/or usage of input data “massaged” in unknown ways are not new, but are routinely excused by requirements to use coordinates from receivers not providing measurement outputs. Often, however, a receiver’s internal 8-state extended Kalman filter (EKF) is not fed with precise carrier phase data — and even when it is, its velocity outputs (being both filtered and unaided) have limited ability to follow high dynamics. Velocity pseudomeasurements under those conditions interfere with IMU aiding.

The extent of reduction in capability of course depends upon the equipment (widely varying and beyond reach of the user) and upon the scenario. Not only flight paths but any trajectory with sharp changes in speed or direction are affected. Twisting, jerking, and winding motions actually experienced can be reported as having reduced severity, and attitude history will suffer further inaccuracy. A demand to accommodate loose coupling is then best satisfied by pseudomeasurements in position only.

This is not an attempt to coax an entire industry into abandoning a very popular choice for satnav/inertial measurement unit (IMU) integration. By “what’s wrong with it” I mean how it’s often done. Believe it or not, there’s a fundamental self-defeating trait in current practice.

Admittedly, I gave short shrift to loose coupling in my 2007 book GNSS Aided Navigation & Tracking; all flight data processing results in it were for tight coupling with carrier phase (actually, 1-second changes in phase) included. Some years ago, though, I reran segments from that flight, including takeoff and another segment containing a 180-degree turn, with only latitude/longitude/altitude (LLH) pseudomeasurements and no carrier-phase information. Not surprisingly, it provided accuracy commensurate with quality of the LLH input (how could it not?). With heading info added, the velocity errors (peak transients of a few meters/second near start and end of the turn; otherwise smaller) and leveling accuracies (a few mrad) were likewise commensurate with input quality.

I never bothered to publish that; the world doesn’t need more testimony for ability to convey data obtained from a receiver with satellite visibility favorable throughout.

I avoided, however, using pseudomeasurements of velocity. Precisely therein lies the target of this critique: velocity from a receiver’s internal 8-state EKF, fed only from position-dependent measurements in the form of pseudoranges. More broadly, this focuses attention on receivers wherein carrier-based information is either unused (immediately below), imprecise (for example, by using deltarange or cutting corners in other ways), or filtered (thus correcting with averaged past, rather than near-instantaneous, derivative data).

First, velocity observables derived exclusively from the same inputs providing position create a glaring violation of independence — but there’s also a bigger issue: Velocity pseudomeasurements with that scheme constitute a basic contradiction of inertial aiding. A main purpose of the IMU is to reveal dynamics with promptness that data derived from pseudorange histories can’t match. Allow me to review some fundamentals here.

At UCLA more than a half-century ago, I taught undergraduate lab experiments. One illustrated under-/over-/critically damped response, a concept so familiar that no math is needed to explain it. Any application will suffice; that experiment involved control of a motor shaft position. A simple transfer function applied to the position feedback signal determined the damping. With all feedback derived from position, either critical or slight underdamping was de rigueur.

Addition of rate aiding (for that experiment, a tachometer) dramatically improved response without degrading accuracy. The obvious reason: it was no longer a choice between responsiveness versus accuracy. Both are available when an independent rate sensor accompanies the position indicator.
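For readers who prefer numbers to recollection, here is a minimal sketch of that tradeoff. It assumes an idealized second-order shaft model (not the original lab hardware); `step_response`, the inertia, the friction, and the gain values are all illustrative choices. With position feedback alone, raising the gain for a faster response costs damping. Adding a tachometer term restores critical damping at the higher gain.

```python
def step_response(k_pos, k_tach, inertia=1.0, friction=2.0, dt=1e-4, t_end=10.0):
    """Unit-step response of an assumed shaft model:

        inertia * theta'' = k_pos * (1 - theta) - (friction + k_tach) * theta'

    k_pos is the position-loop gain; k_tach is the gain on an independent
    rate sensor (tachometer). Returns (overshoot, settling_time), where
    settling_time is the last instant outside a 2% band around the target.
    """
    theta, omega = 0.0, 0.0
    peak, settled_at = 0.0, 0.0
    for i in range(int(round(t_end / dt))):
        torque = k_pos * (1.0 - theta) - (friction + k_tach) * omega
        omega += dt * torque / inertia      # semi-implicit Euler integration
        theta += dt * omega
        peak = max(peak, theta)
        if abs(theta - 1.0) > 0.02:
            settled_at = (i + 1) * dt
    return max(peak - 1.0, 0.0), settled_at


# Position feedback only, low gain: critically damped but slow.
slow = step_response(k_pos=1.0, k_tach=0.0)
# Position feedback only, high gain: fast but badly underdamped.
fast_pos_only = step_response(k_pos=100.0, k_tach=0.0)
# High gain plus tachometer feedback: fast and critically damped.
fast_with_tach = step_response(k_pos=100.0, k_tach=18.0)

for name, (overshoot, t_settle) in [("low gain", slow),
                                    ("high gain, no tach", fast_pos_only),
                                    ("high gain + tach", fast_with_tach)]:
    print(f"{name:20s} overshoot={overshoot:.3f} settling={t_settle:.2f} s")
```

In this toy model the tachometer gain of 18 was picked so that the total damping term equals twice the square root of gain times inertia, which is the critical-damping condition.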

Now, consider redesigning that controller’s rate portion of the feedback signal, giving dominance to sequential changes in position. Unless both highly precise and independent, that would curtail the benefit (that is, improved response to dynamic change) of adding measured rate. Degradation would also arise from giving dominance to a more crudely approximate and/or heavily filtered indication of rate.

There are differences between that example and satnav/IMU integration (for example, estimation versus control; time-varying versus constant gains; and so on) but the principle remains applicable. When derived rate from that 8-state Kalman filter is used to correct (thus overrule) the velocity history, the responsiveness to dynamics offered by the IMU is being undermined by a process that’s beyond reach. The system’s position and velocity then draw nearer to the output of an unaided (standalone) receiver.
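A one-dimensional toy simulation can illustrate the principle. This is only a sketch under stated assumptions, not any receiver's or vendor's actual design. The "receiver" is a constant-velocity Kalman filter fed with position fixes only. The integrated filter propagates with IMU acceleration (carrying an assumed small bias) and is corrected either with position alone or with position plus the receiver's velocity. Position fixes are taken as noise-free and unfiltered so that the effect of the velocity pseudomeasurement is isolated. All function names and tuning values are hypothetical.

```python
def predict(x, P, accel, dt, q):
    """Propagate state [pos, vel] and 2x2 covariance with a known acceleration.
    q is an assumed white-acceleration spectral density."""
    x = [x[0] + x[1] * dt + 0.5 * accel * dt * dt, x[1] + accel * dt]
    p00, p01, p11 = P[0][0], P[0][1], P[1][1]
    n00 = p00 + 2.0 * dt * p01 + dt * dt * p11
    n01 = p01 + dt * p11
    P = [[n00 + q * dt ** 3 / 3.0, n01 + q * dt * dt / 2.0],
         [n01 + q * dt * dt / 2.0, p11 + q * dt]]
    return x, P


def update(x, P, z, idx, r):
    """Scalar Kalman update observing state component idx (0=pos, 1=vel)."""
    s = P[idx][idx] + r
    k = [P[0][idx] / s, P[1][idx] / s]
    innov = z - x[idx]
    x = [x[0] + k[0] * innov, x[1] + k[1] * innov]
    P = [[P[i][j] - k[i] * P[idx][j] for j in range(2)] for i in range(2)]
    return x, P


def peak_velocity_error(use_receiver_velocity):
    """Peak |velocity error| of the integrated filter from maneuver onset on."""
    dt, steps_per_fix = 0.01, 100          # assumed 100 Hz IMU, 1 Hz fixes
    accel_bias = 0.05                       # assumed accelerometer bias, m/s^2
    truth = [0.0, 0.0]
    ins, P = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
    rx, Prx = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
    peak = 0.0
    for k in range(2000):                   # 20 s total
        a = 5.0 if 1000 <= k < 1400 else 0.0   # 5 m/s^2 from t=10 s to t=14 s
        truth = [truth[0] + truth[1] * dt + 0.5 * a * dt * dt, truth[1] + a * dt]
        ins, P = predict(ins, P, a + accel_bias, dt, q=0.01)
        if (k + 1) % steps_per_fix == 0:
            z_pos = truth[0]                # noise-free position fix
            # Receiver-internal filter: no IMU, position-derived velocity only.
            rx, Prx = predict(rx, Prx, 0.0, 1.0, q=1.0)
            rx, Prx = update(rx, Prx, z_pos, 0, r=1.0)
            # Integrated filter: position pseudomeasurement ...
            ins, P = update(ins, P, z_pos, 0, r=1.0)
            # ... and, optionally, the receiver velocity trusted to 0.1 m/s.
            if use_receiver_velocity:
                ins, P = update(ins, P, rx[1], 1, r=0.01)
        if k >= 1000:
            peak = max(peak, abs(ins[1] - truth[1]))
    return peak


pos_only = peak_velocity_error(use_receiver_velocity=False)
with_rx_velocity = peak_velocity_error(use_receiver_velocity=True)
print(f"peak velocity error, position only:        {pos_only:.2f} m/s")
print(f"peak velocity error, with receiver velocity: {with_rx_velocity:.2f} m/s")
```

Under these assumptions the position-only run keeps the IMU's prompt response through the maneuver, while the velocity-aided run is pulled toward the lagging receiver velocity at each fix. The size of the effect depends entirely on the assumed tuning.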

The practice raises various questions:

- Is that an integrated approach worthy of the name? Or doesn’t the IMU just derive attitude adjustments by riding piggy-back — thereby taking (velocity history from an unaided receiver) without giving (unimpeded improvements in response to dynamics, as expected from inertial aiding)?

- How good is that system’s accuracy (not in position; in velocity and in leveling — and not from simulation; from flight data with dynamics)?

- If LLH data were replaced by pseudoranges for tight integration, would velocity pseudomeasurements still be used, to give coupling tight for position but loose for velocity? (I hope not.)

- Since velocity pseudomeasurements are unnecessary in tight integration without carrier phase data, then why use them with LLH?

I’ll turn that last item into a recommendation for satnav/IMU suppliers hoping to compete successfully: If you must include a loosely coupled mode to accommodate LLH-cum-velocity data from a receiver’s 8-state EKF, don’t use receiver velocities as observables. Your system outputs will evolve without them.

Appropriate design is required (you’ll have to do more than just disconnect the velocity inputs) but, given that, all information will be extracted from the IMU and LLH data — with inertial aiding in high dynamics unobstructed by superfluous (8-state-derived) velocity data. Accuracy will improve in not only velocity but also attitude — from simpler software.

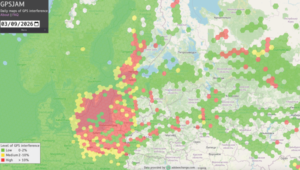

An objection might be raised, noting fair performance when exploiting the full 8-state information if dynamics are always mild. To that I would answer: Is there no limit to how much performance will be sacrificed just to accommodate expediency? Loose coupling already forfeits robustness. Let’s not compound that by surrendering dynamics as well. All of us realize the large, and growing, array of obstacles disrupting successful operation. Why design only for benign conditions? Approaches taking advantage of advances beyond exploiting separate pseudoranges (usage of precise carrier phase, ultratight coupling, FFT-based deep integration) remain ever more in the minority, despite myriad threats to GNSS.

This discussion has concentrated on unnecessary limitations of loosely coupled GNSS/INS integration performance as commonly practiced. Similar problems in systems with tighter integration are less prevalent but still not uncommon (for example, inertial instrument error modeling is still not widely understood, and attitude accuracy reported from many sources doesn’t reach achievable levels). Those familiar with my writings are aware of various changes I would advocate, not limited to inertial or satellite navigation. Those and other issues will be left to another time.

James L. Farrell worked for 31 years at Westinghouse in design, simulation, and validation of navigation and tracking programs. He teaches and consults for private industry, the Department of Defense, and university research through Vigil, Inc.

Thomas H. Kerr III

Hi Jim,

Wow! This was a great discussion on this tricky subject.

Thanks for the insights.

Best regards,

Tom

Paul McBurney

Hi Dr. Jim,

Glad to see such discussions. I fully agree with your conclusion about using a velocity solution based mainly on position information as the lag is clearly driven by the process noise model of the position states.

I think the good news is that most current GNSS modules generate the velocity states mainly from the carrier Doppler observations. The coupling to position is weak and thus, the velocity estimates from the typical 8-state EKF will have very predictable lag. If the cross terms between pos/vel of the 4×4 EKF were examined, they would be small compared to the 4×4 velocity section of the EKF. Moreover, in your example, we would probably see that the 4×4 cross terms were large when there are no higher order measurements to drive the velocity estimation.

The carrier Doppler measurements that drive the velocity are quite accurate at their time tag. Most manufacturers will provide 1 Hz or better, with 5 Hz and 10 Hz commonly available.

The measurement is the average of carrier loop updates, which are generally 50 Hz or better.

With respect to your discussion, the average Doppler error will be dominated by the averaging time.

Thus, as long as one adds a term to the average Doppler measurement noise model to account for the effect of worst case acceleration (such as the vehicle worst case banking or diving), then velocity should actually HELP the integrated INS velocity during maneuvers rather than hurt it as is the case in your note.

The two important facts to make this work right in real life are:

1) The velocity KF only updates when there are enough GNSS measurements available with good GDOP. If the KF velocity is updated without this condition, worst case with 3 or fewer measurements, then the velocity lag will be modeled and can cause problems as in Jim’s note. A good OEM module will provide an option for integrators to force the velocity to be computed only when there are enough concurrent average Doppler measurements.

2) The INS blends the velocity solution at the actual KF velocity measurement time and not when the measurements are made available. In the 1 Hz case, the lag between the actual measurement time and the processing time can be 1/2 second or more. The time for the receiver to compute the fix is generally less than 1/2 second and closer to 100 msec. So a 600 msec lag can be seen. Once again, it might be possible to configure the OEM GNSS module to report the fix time as the original measurement time and not the output time.

Herein lies the greatest challenge in blending the GNSS’s 8-state EKF with the INS. If the manufacturer doesn’t allow configuration of the measurement characteristics, then it’s hard to get the actual velocity time tag. The receiver will generally propagate the fix to the output time, typically by the 600 msec mentioned above. They generally don’t provide the earlier original measurement time tag, even though that is the correct time tag for the velocity.

But a good solution is to generate a predicted correct time tag by back-dating the output time by 1/2 the measurement update interval plus 100-200 msec of calculation latency. Then the velocity can be blended with the corresponding INS measurements.
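As a rough sketch of that back-dating step, assuming a 1 Hz update interval and 100 msec of calculation latency (both adjustable), the helper below estimates the time tag and looks up the buffered INS velocity at that instant. The names `predicted_time_tag` and `interpolate` are hypothetical and the INS history is synthetic.

```python
from bisect import bisect_left


def predicted_time_tag(output_time_s, update_interval_s=1.0, calc_latency_s=0.1):
    """Estimate when a reported velocity was actually valid by subtracting
    half the update interval plus an assumed calculation latency."""
    return output_time_s - (0.5 * update_interval_s + calc_latency_s)


def interpolate(times, values, t):
    """Linearly interpolate a time-sorted history at time t (clamped at ends)."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    i = bisect_left(times, t)
    t0, t1 = times[i - 1], times[i]
    w = (t - t0) / (t1 - t0)
    return values[i - 1] + w * (values[i] - values[i - 1])


# Synthetic 100 Hz INS velocity history during a constant 5 m/s^2 acceleration.
ins_times = [0.01 * k for k in range(301)]
ins_vel = [5.0 * t for t in ins_times]

# Receiver velocity arrives at t = 2.0 s; compare it against the INS velocity
# at the back-dated time tag rather than at the arrival time.
t_tag = predicted_time_tag(output_time_s=2.0)
ins_at_tag = interpolate(ins_times, ins_vel, t_tag)
ins_at_arrival = interpolate(ins_times, ins_vel, 2.0)
print(f"time tag {t_tag:.2f} s, INS velocity there {ins_at_tag:.2f} m/s, "
      f"at arrival {ins_at_arrival:.2f} m/s")
```

With these numbers the 0.6 s offset corresponds to a 3 m/s velocity mismatch at 5 m/s² if the time tag is ignored.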

Et voilà, suddenly the cascaded GNSS/INS issue can be managed to provide good and reliable velocity in all phases of the vehicle operation.

Thanks for the venue to discuss the subject.