Personal Nav and LBS

To enrich the user experience of location-based services and personal navigation, three-dimensional models such as those used in urban planning are added to a smartphone platform without requiring any additional hardware.

Most current map applications for smartphones and other devices providing location-based services (LBS) are based on two-dimensional maps. Three-dimensional (3D) city models are widely used in applications such as engineering design, environmental modeling, and urban planning. Adapting such models for use in smartphones would make it possible to render 3D scenes in real time, enriching contents and user experience for personal navigation and LBS. A delimited yet large-scale event such as the upcoming 2010 World Exposition in Shanghai provides a promising area for system development and testing.

3D visualization consumes a large amount of computing power, and most current successful applications, such as the Google Earth 3D application, run in a PC environment. Implementing 3D visualization in an embedded system such as a smartphone remains a very challenging task.

This article presents an entire 3D personal navigation system based on a smartphone platform, the Nokia S60 platform. The study covers the following aspects:

- 3D personal navigation and LBS service in a smartphone

- 3D city modelling, and

- multi-sensor positioning.

The objectives of the work include prototyping an entire handset-based 3D personal navigation and LBS system utilizing WLAN/Bluetooth positioning technologies, handset built-in GPS/AGPS, and 3D modeling and visualization (basic demonstration scenario), as well as presenting a multi-sensor positioning (MSP) platform in addition to the handset software (advanced demonstration scenario).

3D Personal Navigation and LBS

No additional hardware is added to the Nokia Series 60 (S60) smartphone platform to achieve the 3D visualizations or other functions in the software. Figure 1 demonstrates the functionalities and features available in the 3D viewing of the LBS software. Figure 2 shows the general architecture of the software.

The software development work focuses on the UI layer, framework layer, and component layer. The software mainly includes the following components:

- the 3D visualization engine based on OpenGL ES,

- the route plan component,

- the locator component,

- the LBS client component, and

- UI and framework.

Most of the challenging tasks lie in the development of the elements in the component layer, especially the 3D visualization engine, which is based on the OpenGL ES API available from the S60 platform SDK (Software Development Kit). The high-level architecture of the 3D visualization engine comprises the interface layer, the core engine layer, and the data management layer. The interface layer is responsible for cross-component functional communication, request handling, and data exchange. It gives users access to the 3D scene visualization functionalities of the core engine layer via a single class called NaviSceneControl, which includes all the operations of the 3D visualization: scene zooming, view-angle rotation, scene and cursor movement, and selection of route planning and virtual navigation.

The core engine layer takes care of the 3D scene visualization computation and model object management. To enable 3D visualization of a large region, the objects in the scene are classified into two categories in this layer. One comprises 3D models such as buildings, trees, and poles, while the other is the land-surface texture, which consists of ortho-rectified digital aerial photos. All objects are processed as tiles according to the parameters passed in from the interface layer, so only a small subset of tiles is loaded dynamically at any time instead of the entire data set.
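The tile-based loading described above can be illustrated with a minimal Python sketch. It is not the engine's actual code: the square tile grid, the `view_radius` parameter, and the function names are assumptions introduced here for illustration. It selects the tiles that intersect a window around the camera and works out which tiles to load or release when the view moves.

```python
import math

def visible_tiles(cam_x, cam_y, view_radius, tile_size):
    """Return the (col, row) indices of all tiles intersecting a square
    window of half-width view_radius centred on the camera position.
    The regular square grid is an assumption, not the engine's layout."""
    min_col = math.floor((cam_x - view_radius) / tile_size)
    max_col = math.floor((cam_x + view_radius) / tile_size)
    min_row = math.floor((cam_y - view_radius) / tile_size)
    max_row = math.floor((cam_y + view_radius) / tile_size)
    return {(c, r)
            for c in range(min_col, max_col + 1)
            for r in range(min_row, max_row + 1)}

def update_loaded(loaded, wanted):
    """Return (tiles_to_load, tiles_to_unload) after a view change."""
    return wanted - loaded, loaded - wanted

# Hypothetical example: 100 m tiles, camera moves 100 m east.
before = visible_tiles(150.0, 150.0, 100.0, 100.0)
after = visible_tiles(250.0, 150.0, 100.0, 100.0)
to_load, to_unload = update_loaded(before, after)
```

Only the difference between the two tile sets has to be read from storage, which is what keeps the working set small on a memory-limited handset.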

The data management layer accesses the 3D models and ground-texture images stored on the flash disk of the mobile phone through an independent thread. To reduce the data size of the 3D models, the original .3ds file created with 3ds Max software is compressed to meet the requirements of the mobile device.

A simple route plan component is implemented in the software to enable the user to find and view the route to his or her destination. To show the entire route, the calculated route is displayed on top of a 3D view rendered by a downward-looking camera at a high altitude. The 3D scene in this case looks like an orthoimage, which shows objects as viewed perpendicular to the projection plane.
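How high the downward-looking camera must be placed to show a whole route follows from simple geometry. The Python sketch below is an illustration only; it assumes a square viewport, a symmetric perspective field of view, and a hypothetical `margin` factor, none of which are specified in the article.

```python
import math

def overview_camera(route, fov_deg=60.0, margin=1.1):
    """Return ((x, y), altitude) for a nadir-looking camera that fits the
    whole route in view. route is a list of (x, y) points in metres.
    fov_deg and margin are illustrative assumptions."""
    xs = [p[0] for p in route]
    ys = [p[1] for p in route]
    # Largest horizontal extent of the route's bounding box.
    extent = max(max(xs) - min(xs), max(ys) - min(ys))
    half_width = 0.5 * extent * margin
    # half_width = altitude * tan(fov / 2)  =>  solve for altitude.
    altitude = half_width / math.tan(math.radians(fov_deg) / 2.0)
    centre = ((max(xs) + min(xs)) / 2.0, (max(ys) + min(ys)) / 2.0)
    return centre, altitude

# Hypothetical L-shaped route, 100 m east then 200 m north.
centre, altitude = overview_camera([(0, 0), (100, 0), (100, 200)],
                                   fov_deg=90.0, margin=1.0)
```

With a 90-degree field of view the required altitude equals half the route extent, so the 200 m route above needs a camera about 100 m above the ground.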

The locator component aggregates positioning information from the positioning sensors built into the smartphone, namely a GPS receiver and a WLAN (Wireless Local Area Network) or Bluetooth chip, or from any external positioning device, such as the multi-sensor positioning (MSP) device developed in this project. It forwards the positioning information, including location and heading, to the route plan component and the 3D visualization engine to accomplish the navigation functions.

The purpose of the LBS client component in the handset software is to access the LBS server.

Figure 3 gives an overview of the mechanism for delivering the location-based services. The services are classified into two categories: static services and dynamic services. Static services are those that do not change over time; POIs (points of interest), for example, belong to this category. The static services are stored in a database that users can download from the Internet in advance and store on the memory card of the phone before running the 3D personal navigation and LBS software. This approach saves end users data-transmission fees when accessing the LBS. Dynamic services are those that change over time; a piece of real-time news is a typical dynamic LBS. For accessing the dynamic LBS, Really Simple Syndication (RSS) technology is adopted in our implementation.

The LBS client component is implemented so that the handset automatically pulls the news in the background in real time via a widget reader embedded in the component. Whenever new information is uploaded to the LBS server or to the registered web pages, mobile users are notified.
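The pull-and-notify behaviour described above can be sketched in Python with the standard library's XML parser. This is not the handset's widget reader: the function name, the in-memory `seen` set, and the sample feed are hypothetical and serve only to show how new RSS items can be told apart from ones already delivered.

```python
import xml.etree.ElementTree as ET

def new_items(rss_xml, seen):
    """Parse an RSS 2.0 document and return the items not yet in `seen`.
    `seen` is a set of item identifiers that is updated in place."""
    root = ET.fromstring(rss_xml)
    fresh = []
    for item in root.iter("item"):
        # Prefer <guid>; fall back to <link>, then <title>.
        key = (item.findtext("guid") or item.findtext("link")
               or item.findtext("title"))
        if key and key not in seen:
            seen.add(key)
            fresh.append({"title": item.findtext("title", default=""),
                          "link": item.findtext("link", default="")})
    return fresh

# Hypothetical feed content, for illustration only.
SAMPLE_FEED = """<rss version="2.0"><channel><title>Expo news</title>
<item><title>Pavilion A opens</title><link>http://example.org/a</link>
<guid>a-1</guid></item>
<item><title>Evening concert</title><link>http://example.org/b</link>
<guid>b-1</guid></item>
</channel></rss>"""

seen = set()
first_poll = new_items(SAMPLE_FEED, seen)   # both items are new
second_poll = new_items(SAMPLE_FEED, seen)  # nothing new to notify
```

A background task would call `new_items` each time the feed is fetched and raise a notification for every entry it returns.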

In addition to RSS technology, another approach to broadcasting LBS information is considered in the system: disseminating it via an SBAS (satellite-based augmentation system) pseudolite. The dynamic LBS information (for example, a short message) is first encoded into a user-defined SBAS message. The encoded message is then sent to a pseudolite, from which it is broadcast. The corresponding SBAS message can, in fact, be received by any SBAS-enabled receiver located within the radio coverage area of the pseudolite. However, the encoded LBS message can be decoded only by a receiver with special firmware, developed in this case by the Finnish Geodetic Institute (FGI). Once a dedicated receiver, for example the MSP device that is part of the more advanced demonstration scenario of the project, has received and decoded the LBS messages transmitted from the pseudolite, the content of each message is encoded into a user-defined NMEA (National Marine Electronics Association) message and transmitted to a mobile phone in the vicinity via a Bluetooth connection, as shown in Figure 3. This method of LBS data distribution is available only to a very limited number of users whose receivers carry the special firmware developed by FGI.
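The article does not give the layout of the user-defined NMEA message, so the following Python sketch is only an assumption of how such a relay could look. The `PFGI` sentence identifier, the `LBS` tag, and the hex-encoded payload are hypothetical; the checksum (XOR of all characters between `$` and `*`) and the 82-character sentence limit follow the general NMEA 0183 convention.

```python
from functools import reduce

def nmea_checksum(body):
    """XOR of all characters between '$' and '*', as used by NMEA 0183."""
    return reduce(lambda acc, ch: acc ^ ord(ch), body, 0)

def encode_lbs(text, ident="PFGI", tag="LBS"):
    """Wrap a short text in a proprietary-style sentence.
    ident and tag are hypothetical; the payload is hex-encoded so that it
    cannot contain reserved characters such as ',' or '*'."""
    payload = text.encode("utf-8").hex().upper()
    body = f"{ident},{tag},{payload}"
    sentence = f"${body}*{nmea_checksum(body):02X}\r\n"
    if len(sentence) > 82:
        raise ValueError("sentence exceeds the 82-character limit")
    return sentence

def decode_lbs(sentence):
    """Verify the checksum and return the original text."""
    line = sentence.strip()
    if not line.startswith("$") or "*" not in line:
        raise ValueError("not an NMEA-style sentence")
    body, _, check = line[1:].partition("*")
    if int(check, 16) != nmea_checksum(body):
        raise ValueError("checksum mismatch")
    _, _, payload = body.split(",", 2)
    return bytes.fromhex(payload).decode("utf-8")

sentence = encode_lbs("Gate 3 open")
text = decode_lbs(sentence)
```

Because of the length limit, a message longer than a few dozen characters would have to be split across several sentences, which this sketch does not attempt.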

3D City Modeling

Due to the memory limitations of a mobile phone, the 3D models applied must meet certain requirements. In our study, the test scene for model reconstruction is a street in an ordinary residential area of Espoo, Finland. ROAMER, a vehicle-borne mobile mapping system developed by FGI (see photo), performed the data acquisition. It consists of a carrying platform, a positioning and navigation system, and a 3D laser scanner system. With the ROAMER system, visible objects can be measured with an accuracy of a few decimeters at vehicle speeds of up to 50–60 km/hour, and data for the desired objects can be collected within a range of several tens of meters.

The system produces a large amount of data, from which noise and outlier points must be removed. Valid data is classified into different point groups using an automatic algorithm developed by FGI. These point groups include buildings, trees, roads, and poles. Models are then reconstructed based on these classified point groups.

Modeling methods are developed to meet the application requirements of personal navigation: small model size, high accuracy, and good visual appearance. Small model size is achieved by simplified object geometry and reduced texture resolution. Model accuracy is controlled by extracting building outlines from the classified point cloud and overlaying them on the final 3D model. Model completeness is checked by comparing the resulting model with the original images. Good visual appearance is achieved by applying photo-realistic texture, which provides rich information for the reconstructed 3D scene. Figure 4 presents the overall 3D modeling process, in which only the individual object texture and the final model construction require manual editing. Figure 5 shows the raw data retrieved, and Figure 6 presents the final 3D models of the test area.

To import the final 3D models to a mobile phone, the size of an individual model is restricted to less than 100 kb. To optimize model size, a row of buildings is divided into several building blocks.
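One way to picture the division of a building row into blocks is a greedy grouping of consecutive buildings under a size limit. The Python sketch below is an assumption for illustration, not the authors' procedure; the sizes are hypothetical and are expressed in the same unit as the limit.

```python
def split_into_blocks(building_sizes, limit=100):
    """Group consecutive buildings into blocks whose total size stays
    strictly below `limit`. Returns a list of lists of building indices.
    Greedy grouping is an illustrative assumption."""
    blocks, current, current_size = [], [], 0
    for index, size in enumerate(building_sizes):
        if size >= limit:
            raise ValueError(f"building {index} alone reaches the limit")
        # Start a new block when adding this building would hit the limit.
        if current and current_size + size >= limit:
            blocks.append(current)
            current, current_size = [], 0
        current.append(index)
        current_size += size
    if current:
        blocks.append(current)
    return blocks

# Hypothetical model sizes for a row of five buildings.
blocks = split_into_blocks([40, 50, 30, 60, 20], limit=100)
```

Keeping the buildings in street order means each block remains a contiguous piece of the row, which suits the tile-based loading used by the visualization engine.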

Multi-Sensor Positioning

As long as open-sky satellite-signal conditions are available, locating a mobile user with the built-in GPS receiver of a smartphone poses no problem, with a positioning accuracy of a few meters. However, most popular location-based services are used in GNSS-degraded environments such as indoor spaces and urban canyons. Locating a mobile user seamlessly, anytime and anywhere, is still a very challenging task, especially when such an indoor/outdoor positioning solution must be implemented on a digital signal processor (DSP) platform.

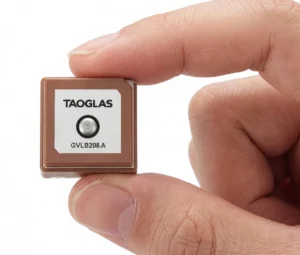

FGI is now developing a DSP-based multi-sensor positioning platform as a step toward a seamless indoor/outdoor positioning solution. The platform consists of a GPS module, a 3D accelerometer, and a 2D digital compass (Figure 7). A DSP is embedded in the GPS module. All sensors are integrated with the DSP, which hosts the core software for real-time sensor data acquisition and processing to estimate the user’s location.

The multi-sensor platform provides opportunities to investigate positioning solutions based on a GPS/Reduced-INS (Inertial Navigation System) combination or a GPS/PDR (Pedestrian Dead Reckoning) combination. Reduced-INS is defined here as a combination of a 3D accelerometer and a 2D digital compass, and is a very low-cost approach to sensor augmentation. The GPS/Reduced-INS solution is implemented with a loosely coupled Kalman filter, while the GPS/PDR algorithm is based on pedestrian-targeted dead reckoning, with heading-error and step-length estimation.
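The basic idea of pedestrian dead reckoning can be sketched in a few lines of Python. This is a generic textbook form, not the FGI algorithm: the threshold-crossing step detector, the fixed 0.7 m step length, and the threshold value are assumptions, and the heading-error modeling mentioned above is omitted.

```python
import math

def detect_steps(acc_norm, threshold=11.0):
    """Return sample indices where the acceleration magnitude (m/s^2)
    crosses `threshold` upward. A simple assumed step detector."""
    return [i for i in range(1, len(acc_norm))
            if acc_norm[i - 1] < threshold <= acc_norm[i]]

def pdr_step(east, north, step_length, heading_deg):
    """Advance the position by one step along the compass heading
    (degrees clockwise from north)."""
    h = math.radians(heading_deg)
    return (east + step_length * math.sin(h),
            north + step_length * math.cos(h))

def dead_reckon(start, headings_deg, step_length=0.7):
    """Accumulate one position per detected step."""
    east, north = start
    track = [(east, north)]
    for heading in headings_deg:
        east, north = pdr_step(east, north, step_length, heading)
        track.append((east, north))
    return track

# Hypothetical samples: two steps detected, both heading due east.
steps = detect_steps([9.8, 12.0, 9.8, 9.0, 12.5, 13.0, 9.8])
track = dead_reckon((0.0, 0.0), [90.0 for _ in steps])
```

Because each step adds to the previous position, any error in heading or step length accumulates, which is why the estimation of both quantities matters during a GPS outage.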

Preliminary tests analyzing both the GPS/Reduced-INS and GPS/PDR solutions have been carried out in a sports field on a 400-meter running track. To simulate a GPS outage, the GPS measurements were ignored for one minute. During this one-minute “outage,” the traveled trajectories were estimated with the Reduced-INS solution and the PDR solution. Figure 8 shows the trajectory of the Reduced-INS solution, while Figure 9 shows that of the PDR solution.

The Reduced-INS approach provides a reasonable result, with a position error of about 20 meters at the end of the forced 1-minute GPS outage. The PDR approach provides a better prediction in this case, with an error of only a couple of meters after 1 minute without absolute location input from the GPS. This is because the heading errors are modeled carefully, using training data from a previous run along the same track, and because step detection is accurate.
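The effect of a forced outage on a loosely coupled filter, in which GPS position fixes rather than raw satellite measurements are fused with the inertial prediction, can be illustrated with a one-dimensional Python sketch. It is a simplified stand-in for the actual filter: the two-state model, the noise values `q` and `r`, the initial covariance, and the noise-free simulated walk are all assumptions.

```python
def kf_predict(state, cov, dt, accel=0.0, q=0.01):
    """Propagate (position, velocity) and its 2x2 covariance by dt seconds.
    accel is the measured along-track acceleration; q is an assumed
    acceleration noise variance."""
    p, v = state
    (a, b), (c, d) = cov
    new_state = (p + v * dt + 0.5 * accel * dt * dt, v + accel * dt)
    new_cov = (
        (a + dt * (b + c) + dt * dt * d + q * dt ** 4 / 4,
         b + dt * d + q * dt ** 3 / 2),
        (c + dt * d + q * dt ** 3 / 2, d + q * dt * dt),
    )
    return new_state, new_cov

def kf_update(state, cov, gps_pos, r=9.0):
    """Correct the state with a GPS position fix of assumed variance r."""
    p, v = state
    (a, b), (c, d) = cov
    s = a + r                    # innovation variance
    k0, k1 = a / s, c / s        # Kalman gain for position and velocity
    innov = gps_pos - p
    new_state = (p + k0 * innov, v + k1 * innov)
    new_cov = (((1 - k0) * a, (1 - k0) * b), (c - k1 * a, d - k1 * b))
    return new_state, new_cov

def run(speed=1.5, fix_seconds=30, outage_seconds=60):
    """Walk at constant speed with 1 Hz fixes, then ignore GPS."""
    state, cov = (0.0, 0.0), ((100.0, 0.0), (0.0, 100.0))
    for t in range(1, fix_seconds + 1):
        state, cov = kf_predict(state, cov, 1.0)
        state, cov = kf_update(state, cov, speed * t)
    var_before = cov[0][0]
    for _ in range(outage_seconds):
        state, cov = kf_predict(state, cov, 1.0)  # prediction only
    return state, var_before, cov[0][0]

state, var_before, var_after = run()
```

During the outage only the prediction step runs, so the position variance grows steadily; the real sensors add accelerometer and compass errors that this idealized walk leaves out.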

Conclusions

The prototype system will be tested and demonstrated at the 2010 World Expo in Shanghai, implemented with a smartphone software package: anyone with a Nokia phone (S60 with built-in GPS and WLAN/BT) can experience the 3D personal navigation and LBS service in the Expo area by downloading and installing the 3D models. The prototype has so far met these challenges: the high performance required of real-time 3D visualization in a smartphone; high positioning availability with acceptable accuracy in indoor and outdoor environments; and the demanding requirements of the 3D models for a small phone, including small model size, high accuracy, and good visual appearance.

Manufacturers

The multi-sensor positioning platform consists of a Fastrax iTrax03 GPS module, a VTI SCA3000-D1 3D accelerometer, and a Honeywell HMC6352 2D digital compass. The ROAMER mobile mapping system consists of a Faro LS 880HE80 terrestrial laser scanner, two AVT Oscar F-810C cameras, and a NovAtel SPAN geo-reference system.

RUIZHI CHEN is a professor and head of the Department of Navigation and Positioning at the Finnish Geodetic Institute, where Heidi Kuusniemi is a specialist research scientist, Juha Hyyppä is a professor and head of the Department of Remote Sensing and Photogrammetry, Risto Kuittinen is director general, Yuwei Chen is a specialist research scientist, and Ling Pei, Lingli Zhu and Jingbin Liu are senior research scientists.

JIXIAN ZHANG is a professor and president of the Chinese Academy of Surveying and Mapping, where Yan Qin and Zhengjun Liu also work as the director of the Department of Research and Development and the group leader in the Institute of Photogrammetry and Remote Sensing, respectively.

JARMO TAKALA is a professor and head of the Department of Computer Systems at Tampere University of Technology in Finland, where Helena Leppäkoski is a researcher.

JIANYU WANG is a professor at the Shanghai Institute of Technical Physics, Chinese Academy of Sciences.