
A new method of imaging that harnesses artificial intelligence to turn time into visions of 3D space could help cars, mobile devices and health monitors develop 360-degree awareness.

Photos and videos are usually produced by capturing photons with digital sensors. 3D images can be generated either by positioning two or more cameras around the subject to photograph it from multiple angles, or by using streams of photons to scan the scene and reconstruct it in three dimensions. Either way, an image can only be built if spatial information about the scene is gathered.

Now, researchers based in the United Kingdom, Italy and the Netherlands describe how they have found an entirely new way to make animated 3D images — by capturing temporal information about photons instead of their spatial coordinates. The team’s paper, “Spatial images from temporal data,” was published in Optica.

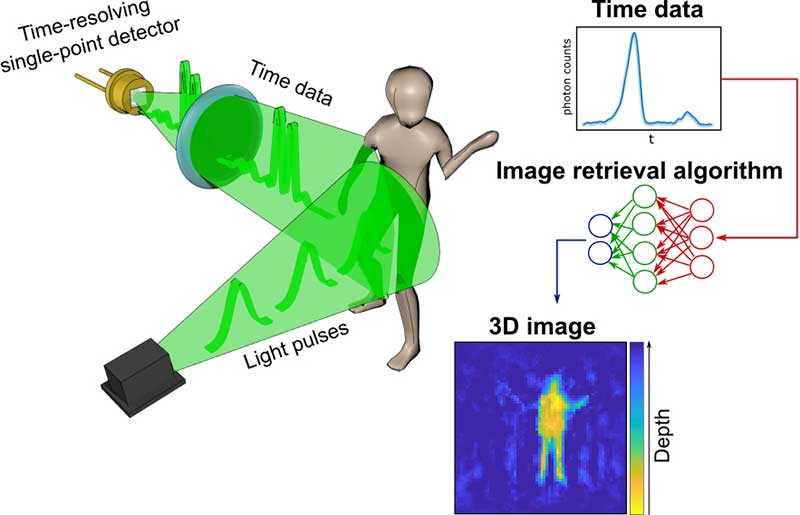

Their process begins with a simple, inexpensive single-point detector tuned to act as a kind of stopwatch for photons. Unlike cameras, which measure the spatial distribution of color and intensity, the detector records only how long it takes photons from a split-second laser pulse to bounce off each object in a scene and reach the sensor. The farther away an object is, the longer its reflected photons take to arrive.
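As a rough illustration of the stopwatch principle, the sketch below converts between an object's distance and the round-trip delay of its echo, using the standard relation t = 2d/c. It assumes propagation at the vacuum speed of light and ignores detector jitter; the function names are hypothetical and are not taken from the researchers' code.

```python
# Minimal time-of-flight sketch: converts between an object's distance and the
# round-trip delay of a reflected photon, assuming propagation in a vacuum.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def round_trip_time_s(distance_m: float) -> float:
    """Delay between the laser pulse and the echo from an object distance_m away."""
    # The photon travels to the object and back, so the path length is 2 * distance.
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


def distance_from_delay_m(delay_s: float) -> float:
    """Invert the relation: recover the distance implied by a measured delay."""
    return SPEED_OF_LIGHT_M_PER_S * delay_s / 2.0


if __name__ == "__main__":
    for d in (1.0, 2.0, 4.0):
        print(f"{d:.1f} m -> {round_trip_time_s(d) * 1e9:.2f} ns")
```

The delays involved are tiny: each extra metre of distance adds only about 6.7 nanoseconds to the round trip, which is why the detector has to time photons very precisely.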

The arrival times of the photons reflected from the scene (the temporal data) are collected in a simple histogram. A sophisticated neural network then turns these histograms into a 3D image. The researchers “trained” the network by showing it thousands of conventional photos of the team moving and carrying objects around the lab, alongside temporal data captured by the single-point detector at the same time. Eventually, the network learned enough about how the temporal data corresponded to the photos that it could create highly accurate images from the temporal data alone.
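The pipeline can be mimicked in miniature. The Python sketch below is a toy under stated assumptions, not the team's method: each "scene" is a hypothetical 8 x 6 depth map, every pixel is assumed to return exactly one photon, arrival times are binned into 100-picosecond histogram bins, and a simple nearest-neighbour lookup stands in for the trained neural network, pairing each training histogram with the image recorded at the same time.

```python
# Toy sketch (hypothetical names and parameters): build temporal histograms from
# depth maps and "decode" them with a nearest-neighbour lookup standing in for
# the neural network used in the research.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
BIN_WIDTH_S = 1e-10   # assumed 100 ps histogram bins
NUM_BINS = 300        # covers round trips up to 30 ns, i.e. objects up to ~4.5 m


def temporal_histogram(depth_map):
    """Bin the round-trip delay of one photon per pixel; pixel positions are lost."""
    counts = [0] * NUM_BINS
    for row in depth_map:
        for depth_m in row:
            delay_s = 2.0 * depth_m / SPEED_OF_LIGHT_M_PER_S
            index = int(delay_s / BIN_WIDTH_S)
            if 0 <= index < NUM_BINS:
                counts[index] += 1  # photons arriving after the last bin are dropped
    return counts


def make_scene(person_depth_m, wall_depth_m=4.0, width=8, height=6, person_cols=(3, 5)):
    """Hypothetical depth map: a flat back wall with a 'person' in a few columns."""
    return [[person_depth_m if person_cols[0] <= col < person_cols[1] else wall_depth_m
             for col in range(width)]
            for _ in range(height)]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


class NearestNeighbourDecoder:
    """Stand-in for the trained network: stores (histogram, image) training pairs."""

    def __init__(self):
        self.pairs = []

    def train(self, histogram, image):
        self.pairs.append((histogram, image))

    def predict(self, histogram):
        # Return the stored image whose histogram is closest to the query.
        _, image = min(self.pairs, key=lambda pair: squared_distance(pair[0], histogram))
        return image


decoder = NearestNeighbourDecoder()
for depth in (1.0, 1.5, 2.0, 2.5, 3.0):
    scene = make_scene(depth)  # the "photo" recorded alongside the temporal data
    decoder.train(temporal_histogram(scene), scene)

reconstructed = decoder.predict(temporal_histogram(make_scene(2.0)))
print(reconstructed == make_scene(2.0))
```

A lookup like this only recognises scenes close to ones it has stored; a neural network can generalise between training examples in ways this toy cannot.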

In the proof-of-principle experiments, the team constructed moving images at about 10 frames per second from the temporal data, although the hardware and algorithm used have the potential to produce thousands of images per second.

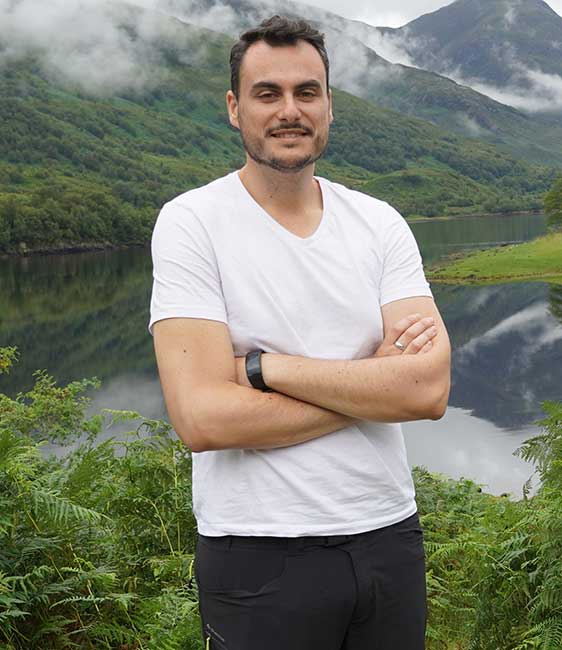

Alex Turpin, a Lord Kelvin Adam Smith Fellow in Data Science at the University of Glasgow’s School of Computing Science, led the university research team with Prof. Daniele Faccio and support from colleagues at the Polytechnic University of Milan and Delft University of Technology.

“Cameras in our cellphones form an image by using millions of pixels,” explained Turpin. “Creating images with a single pixel alone is impossible if we only consider spatial information, as a single-point detector has none. However, such a detector can still provide valuable information about time. What we’ve managed to do is find a new way to turn one-dimensional data — a simple measurement of time — into a moving image that represents the three dimensions of space in any given scene.”
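Turpin's point can be made concrete with a toy calculation. In the Python sketch below (hypothetical 1 x 4 depth maps, assumed 100-picosecond bins, one photon per pixel), an object on the left of the field of view and the same object on the right produce identical histograms, because a single-point detector records only how far away things are, not where they are. This suggests why the method needs a network trained on example scenes rather than a direct geometric inversion.

```python
from collections import Counter

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
BIN_WIDTH_S = 1e-10  # assumed 100 ps timing bins


def temporal_histogram(depth_map):
    """Count returning photons per time bin; the pixel positions are discarded."""
    return Counter(int(2.0 * d / SPEED_OF_LIGHT_M_PER_S / BIN_WIDTH_S)
                   for row in depth_map for d in row)


# Toy 1x4 depth maps (metres): an object on the left vs the same object on the right.
object_left = [[1.5, 4.0, 4.0, 4.0]]
object_right = [[4.0, 4.0, 4.0, 1.5]]

same = temporal_histogram(object_left) == temporal_histogram(object_right)
print(same)  # True: the two scenes are indistinguishable from timing alone
```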

The approach does not depend on light at all; the paper also describes how the team used radar waves for the same purpose. “We’re confident that the method can be adapted to any system which is capable of probing a scene with short pulses and precisely measuring the return ‘echo.’”
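The need for short pulses and precise timing follows from the same round-trip relation: two surfaces can only be told apart if their echoes land in different time bins, so the depth resolution is roughly c·Δt/2 for a timing precision Δt. The Python sketch below evaluates that relation. It assumes an electromagnetic pulse (light or radar) travelling at the vacuum speed of light and ignores pulse width and noise; an acoustic system would need the speed of sound instead.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def depth_resolution_m(timing_precision_s: float) -> float:
    """Smallest separable depth difference for a given timing precision.

    The factor of 2 accounts for the echo covering the distance twice.
    """
    return SPEED_OF_LIGHT_M_PER_S * timing_precision_s / 2.0


for precision_s in (1e-9, 1e-10, 1e-11):
    print(f"{precision_s * 1e12:.0f} ps -> {depth_resolution_m(precision_s) * 100:.2f} cm")
```

With 100-picosecond timing, for example, the resolution is about 1.5 cm; with 1-nanosecond timing it degrades to about 15 cm.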

Right now, the neural net’s ability to create images is limited to what it has been trained to pick out from the temporal data of scenes created by the researchers. But with further training and by using more advanced algorithms, it could learn to visualize a range of scenes, widening its potential applications in real-world situations.

“The single-point detectors that collect the temporal data are small, light and inexpensive, which means they could be easily added to existing systems like the cameras in autonomous vehicles to increase the accuracy and speed of their pathfinding,” Turpin said. “Alternatively, they could augment existing sensors in mobile devices like the Google Pixel 4, which already has a simple gesture-recognition system based on radar technology. Future generations of our technology might even be used to monitor the rise and fall of a patient’s chest in a hospital to alert staff to changes in their breathing, or to keep track of their movements to ensure their safety in a data-compliant way.”

Next, the team will work on a self-contained, portable system-in-a-box and examine options for furthering the research with input from commercial partners. The research was funded by the Royal Academy of Engineering, the Alexander von Humboldt Stiftung, the Engineering and Physical Sciences Research Council (EPSRC) and Amazon.

Citation. A. Turpin, G. Musarra, V. Kapitany, F. Tonolini, A. Lyons, I. Starshynov, F. Villa, E. Conca, F. Fioranelli, R. Murray-Smith, and D. Faccio, “Spatial images from temporal data,” Optica 7, 900-905 (2020), https://doi.org/10.1364/OPTICA.392465.